Assignment #2 - Developing an Evaluation Rubric

By Wendy Blancher, Jane Christensen and Kris Sward

March 13, 2014

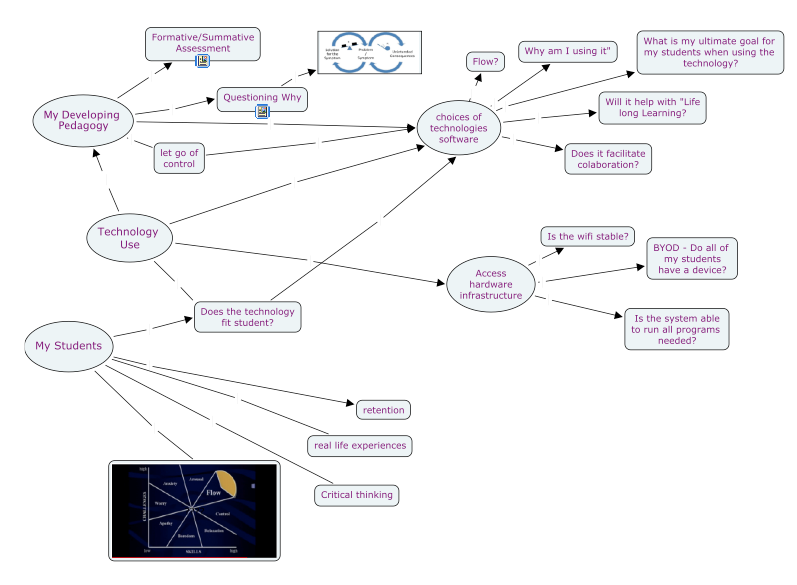

i) Three considerations drove our choice of an app. First, we felt that it had to fit with what we were teaching and serve the purpose we had set out to use it for. This was important because a single app can be used in many ways, and many different apps can be used in the same way. The app that we chose to use in our classrooms had to fit our needs as teachers and the needs of the lesson we were using it for. Second, we felt that the app had to be easy to use. If 15 students are lined up to get help with how to use the app, it isn't really going to further their learning. Students with limited to moderate experience using mobile devices should be able to choose the app and get to work with little or no guidance from their teacher (it should be intuitive). Finally, we felt that cost was a factor. Many schools have a variety of devices - not just one - and purchasing the same app for 10 or 20 devices can be prohibitive. Apple does offer a Volume Purchase Program (VPP) for schools and corporations, which can cut the price of apps in half for purchases of 15 or more copies. Even so, price must be considered when looking at using apps in the classroom.

We also felt it was important to consider whether an app prompts students or offers tutorials to help them understand how to use it. Progress reports that are available and can be shared are also important to teachers as a way of tracking student progress. Being able to differentiate for specific students is valuable too: having students start at a level that is appropriate for them and then move through learning activities relevant to their needs, or simply being able to use simpler controls or make the levels harder or easier, makes the app more valuable when used across an entire class or school. We have had experience with a number of apps that would simply crash or not work fluidly, and we thought it was important to avoid these glitches to make the most of student learning time with mobile devices. All of these considerations (and more!) are represented in various parts of our rubric.

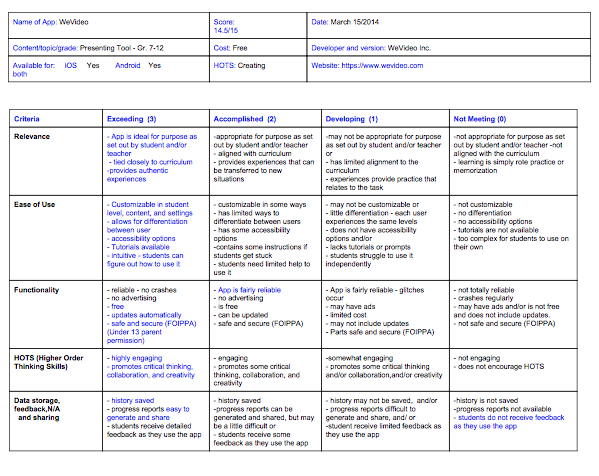

ii) The rubric we developed is attached.

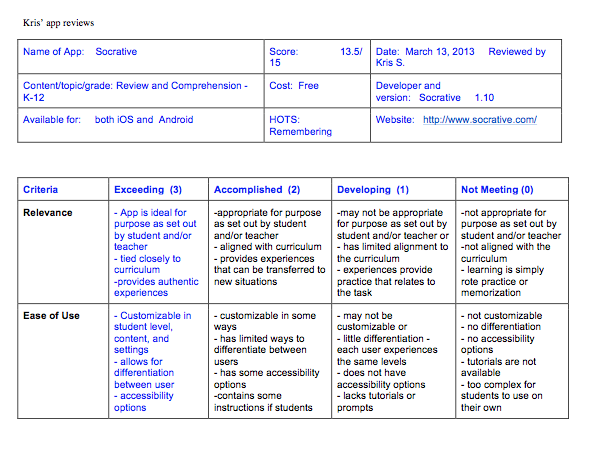

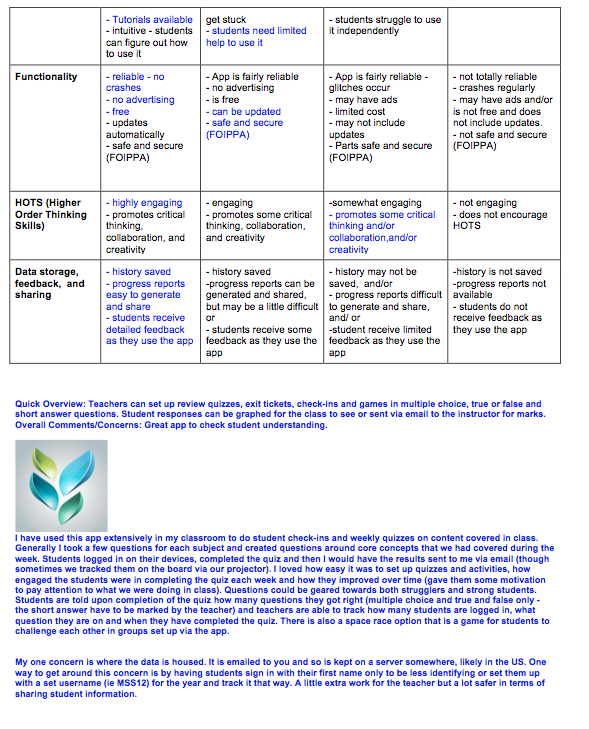

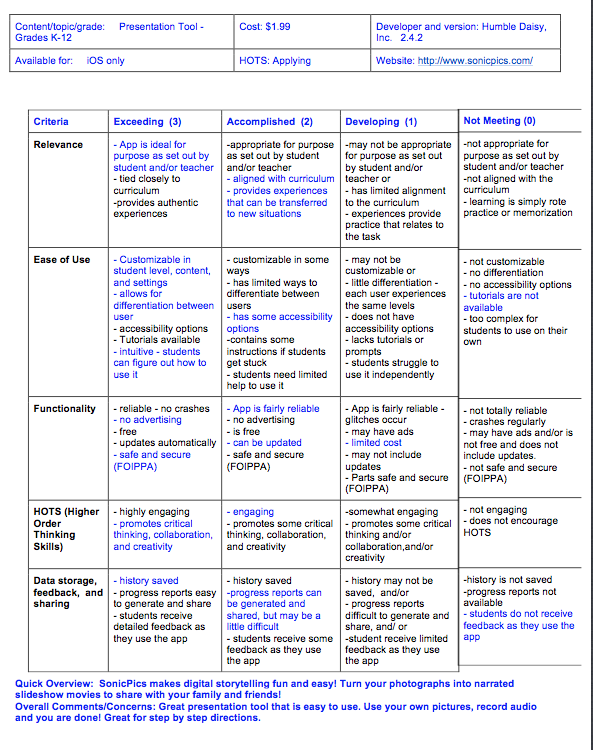

iii) In looking through a variety of rubrics, the group was fairly well aligned with the categories we chose for our rubric. We chose five: Relevance; Ease of Use; Functionality; HOTS; and Data Storage, Feedback and Sharing. Initially we had more categories (10, I think) and decided to group some together and pare them down in order to keep the rubric to one page and make it more user-friendly. Here is an overview of why we chose the categories we did:

a) Relevance - We used the Relevance category to distinguish apps that were tied to the curriculum and suited a specific purpose from those that were simply fun practice of simple tasks or basic skills. The last spectrum measures how relevant the experiences the app provides are to students' lives and how well the skills they learn in the app can be transferred to other tasks.

b) Ease of Use - This is where we took into account how easy it was for a student with moderate experience using a mobile device to pick up the app and start using it. This includes whether the app has an intuitive interface and whether tutorials or prompts are used to move the student along. This is also where we tracked the ability to set levels and content based on different users.

c) Functionality - We grouped a number of items under Functionality as they all speak to how well the app works for students. First, we wanted to provide an idea of how stable the app was and whether it crashed or glitched a lot during student use. This can be a game changer in whether or not we employ the app in our classroom, so we wanted to recognize its importance. We also felt that advertisements take away from the learning experience. Ads often flash, move or otherwise try to grab the user's attention, and we wanted as few distractions as possible to ensure consistent student engagement when using the app. Price is a factor, as stated above, so we chose to measure it here. We've also included a section on how the app is updated: whether updates happen automatically or the device needs to be connected to the parent computer. Finally, we had concerns about what data were stored, how, and where. Some apps require users to set up an account that involves sharing email addresses and personal information. Other apps simply allow users to log in via a username. We recognize the importance of FOIPPA concerns and wanted to reflect that in our rubric.

d) HOTS - In this category we were hoping to measure student engagement and students' use of Higher Order Thinking Skills (Remembering, Understanding, Applying, Analyzing, Evaluating and Creating).

e) Data storage, feedback and sharing - We felt that all aspects of this category were important and closely enough related to be grouped together. We wanted to ensure that data was kept for each user profile and that the apps were persistent, meaning that the user's progress was maintained between logins. We also felt that students needed feedback on how they were doing, and that teachers needed a way of tracking student progress, either by being emailed results or by having access to data collected by the app.

Part II

The apps that we have chosen to review are as follows.

Higher Order Thinking Skill App Reviewed By:

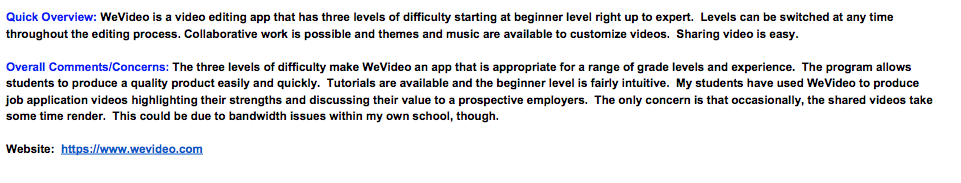

Creating Wevideo/Comiclife Wendy

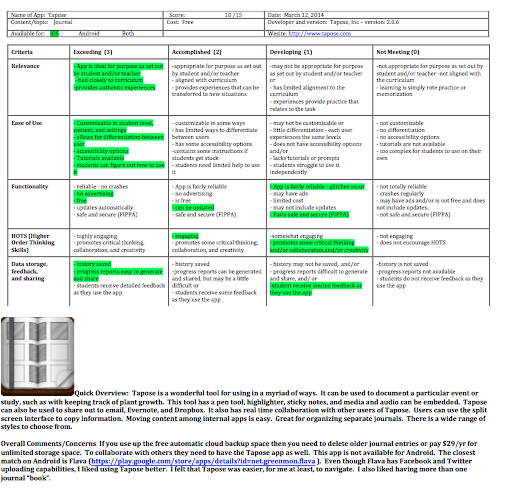

Evaluating Tapose Jane

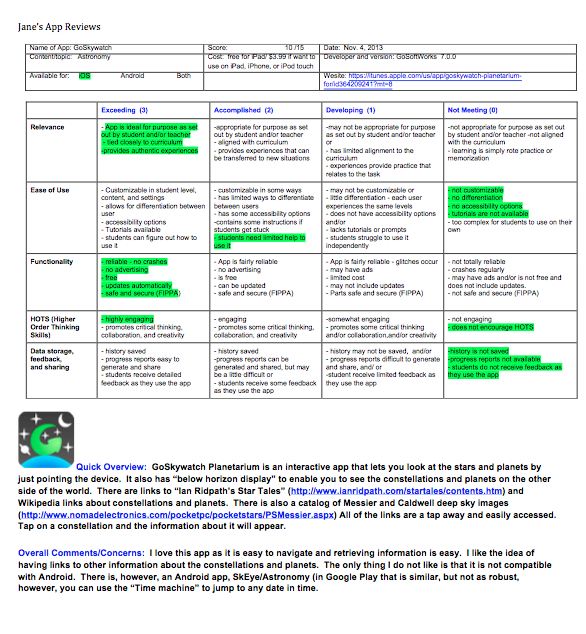

Analyzing GoSkywatch Jane

Applying Sonic Pics Kris

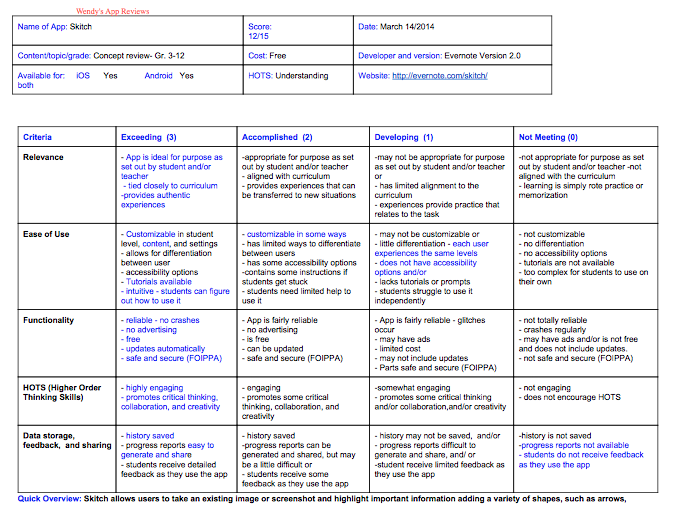

Understanding Skitch Wendy

Remembering Socrative Kris
